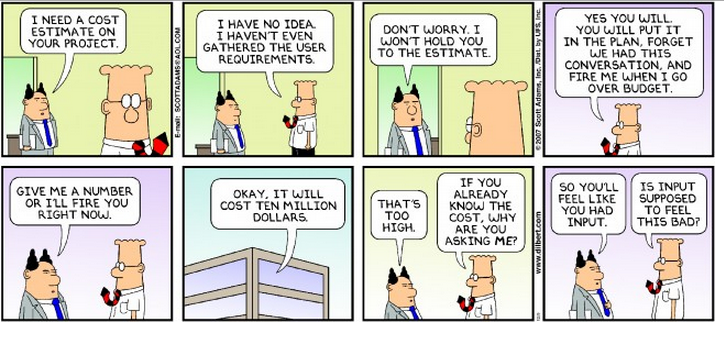

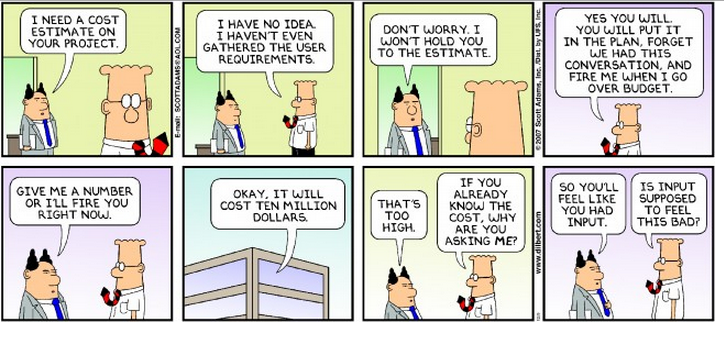

A Product Owner or development team will often be asked for an estimate or a forecast for a product, a feature, an iteration, or a story. But when you go to a team member and ask them, it is not uncommon for the colour to drain from their face, the twitching to start, and their pulse to race. Past experience says that the next words they speak will haunt them for the foreseeable future, maybe even for the rest of their career. “It is only a rough estimate,” you say to reassure them, but they just know you have other plans and that the fate of mankind hangs in the balance. Or so it seems.

The difference between a forecast and an estimate.

For most practical purposes there are only subtle differences, the main one being that a forecast deals exclusively with the future. When I think of the two, I tend to think of an estimate as information and a forecast as expectation. An estimate is generally an isolated, objective assessment, whereas a forecast is subjective: I will likely use one or more estimates to create my forecast, and I may apply other factors as well.

Both carry huge degrees of variation in accuracy, precision, and risk, but in some instances my estimate and my forecast may well be the same for all practical purposes.

For example, if I were asked to estimate a journey, I might say that it takes between 15 and 45 minutes, and 30 minutes on average. If I am asked to forecast when I will arrive, I need to know not only the estimate but also the time the journey starts and whether there are other factors, such as a need to stop for fuel or food. I may also add a weighting to the journey based on the time of day. Thus my forecast, whilst based on an estimate, may not be the same as the estimate. Other times, though, I may simply take the estimate and it will become my forecast.
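The journey example can be sketched in a few lines of Python. This is only an illustration: the departure time, the 10-minute fuel stop and the rush-hour weighting of 1.5 are made-up values, and `Estimate` and `forecast_arrival` are hypothetical names rather than anything from a real tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Estimate:
    """An isolated assessment of journey duration, in minutes."""
    low: int
    typical: int
    high: int


def forecast_arrival(estimate, departure, stops_minutes=0, time_of_day_weight=1.0):
    """Turn a duration estimate into (earliest, expected, latest) arrival times.

    stops_minutes and time_of_day_weight are illustrative adjustments,
    not values taken from the article.
    """
    def arrive(minutes):
        adjusted = minutes * time_of_day_weight + stops_minutes
        return departure + timedelta(minutes=adjusted)

    return arrive(estimate.low), arrive(estimate.typical), arrive(estimate.high)


journey = Estimate(low=15, typical=30, high=45)
departure = datetime(2024, 1, 8, 17, 0)  # hypothetical start time

# Rush hour (weight 1.5) plus a 10-minute fuel stop.
earliest, expected, latest = forecast_arrival(
    journey, departure, stops_minutes=10, time_of_day_weight=1.5
)
print(expected.strftime("%H:%M"))  # 17:55
```

With no stops and a weighting of 1.0 the expected arrival is simply the departure time plus the 30-minute estimate, which is the case where the estimate becomes the forecast.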

In practice, though, the terms are used interchangeably and it likely makes very little difference, except when it comes to expectations. If I ask “How long will this take?” I am asking for an estimate; if I ask “When will this be done?” I am asking for a forecast. Both are fine so long as everyone knows what is meant by the question.

Forecasting is misunderstood

Forecasting is guesswork. It may be scientific guesswork, and it may be based on past experience, metrics and clever projection tools, but it is a guess. You will be wrong far more often than you are right. The more professional, clever and precise the forecast looks, the more confidence you may instill in that guess. But it is still a guess, and when your audience gains undue confidence in your guess they tend to treat it as fact, not forecast. It might be that a guess is all that is needed, but dressing a guess up and delivering it with confidence may create a perception of commitment.

A forecast is commitment (in the eyes of the one asking)

In normal circumstances, giving a forecast carries an implied commitment, even if you give caveat after caveat. And at any point where you even hint at a commitment to deliver a fixed scope at a fixed cost AND by a fixed date, you are setting yourself up for disappointment. Sadly, that is how most people see a forecast.

By all means work to a budget or a schedule or even a scope (although that is very likely to vary) but ideally ONLY one and never all three.

Understanding why a forecast is needed.

There are many reasons why people ask for forecasts, and the why is the most crucial aspect of the process. Understanding the why is the first step to providing the right information and, hopefully, changing the conversation. Forecasting for stories and features is both more reliable and more accurate; it is at the project/product level that forecasting causes the most confusion and its purpose is least understood.

If possible, try to change the question of “When?” into an understanding of “Why?”

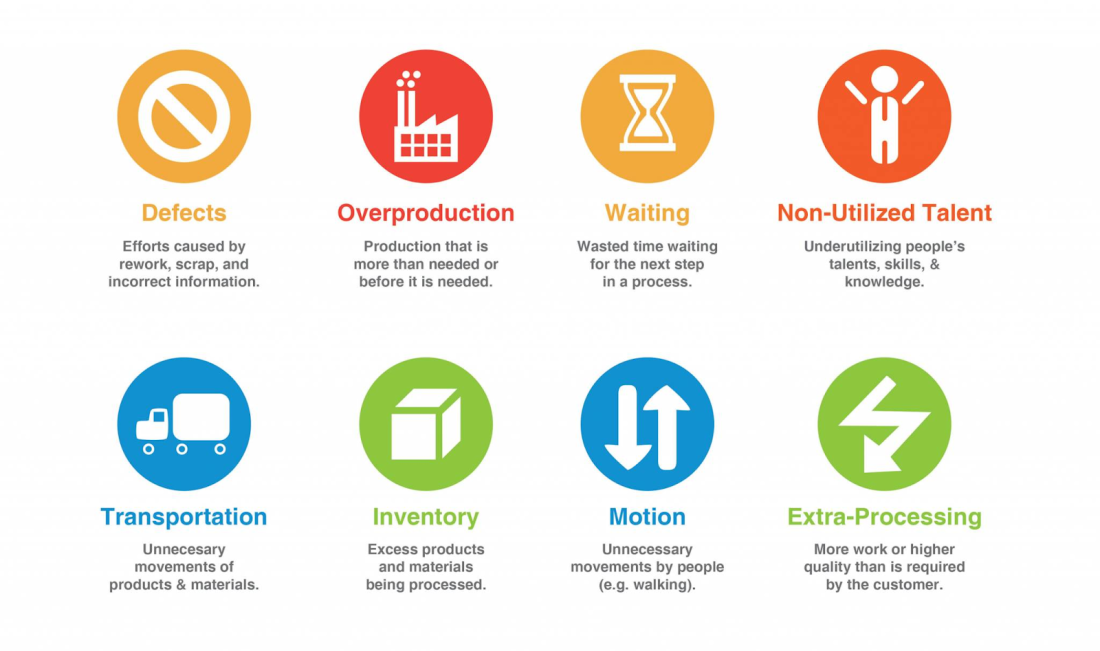

Generally speaking we gather information to help us make decisions. If the information will have no bearing on our decisions then gathering it is wasteful. Forecasting all too often falls into this category. We take time and effort to produce a forecast, and yet if the forecast has no bearing on any decision, that effort was wasted. More often, the level of detail in the forecast was unnecessary for the purpose for which it was used.

Making predictions of unknown work based on incomplete information and a variety of assumptions leads to poor decisions, especially when the questions being asked are not directly reflective of the decisions being made.

In my experience the Why? generally falls into three broad categories:

- How long will this take?

- How much will this cost? and

- simple curiosity/routine.

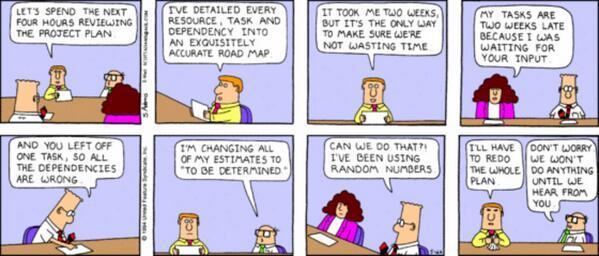

But most people don’t ask why. They spend time creating a forecast and present it without knowing how it will be used. Some people asking for a forecast/estimate may not even know why they are asking; they just always get a forecast. But let us delve a little deeper.

How long will this take?

Why do you need to know? Some typical answers are:

- I need to plan

- I have dependencies

- I need to prioritize

- I have a deadline

How much will this cost?

Why do you need to know? Some typical answers are:

- I want to know if I will get a good Return on Investment

- I need to budget

- I need to prioritize

- I have limited available funds

Curiosity/routine

- We always ask for a forecast, I need to put something in my report

- I want reassurance that you know what you are doing

- I want to know if my project is on track

What you will notice about these answers is that once you ask why, the original request for a forecast often no longer makes sense. The person asking is not really interested in the forecast itself, but in some other factor that they can infer from it. If the intent is clear then the question can be tailored to get the required information in a better way.

e.g.

I need a forecast - Why?… I need to know when I can allocate staff to the next product/project.

In this case would a simple high level guess be sufficient? I feel confident that staff won’t be available for the next 3-6 months, in 3 months let’s review, I’ll have a better idea then…

I could put a lot of effort into a detailed forecast but an instinctive response may give all the information needed, saving us a lot of trouble.

or

I need a forecast - Why?… I want to ship this to maximize the Christmas shopping period - or I want to time the launch for a trade show etc.

This isn’t a request for a forecast; it is a request for an assurance that something suitable will be available for a particular event/date. I can give you an assurance and a confidence level without a detailed forecast. I may even change the priority of some features to ensure that those needs are factored into the product earlier, or I can de-scope some features to meet a certain date.

or

I need a forecast - Why?… I have limited funds available, and I want to know when I can start getting a return on this investment.

This isn’t a request for a forecast; it is a request to plan the product delivery so that revenue can be realized sooner and for the least investment. It may be possible to organise delivery so that future development is funded by delivering a reduced-functionality product early, or so that development is spread over a longer period to meet your budget.

or

I need a forecast - Why?… I need to budget. The way this company works I must get approval for my project expenses and staffing in advance, so I need to present forecasts of costs and timelines.

The answer is twofold. First, can you challenge the process? It might be better to have a fixed staffing pool and prioritize products/projects so that the most important ones are done first before moving on to the next, in which case the forecast is irrelevant and it becomes a question of prioritization. Second, if the issue is ensuring staffing for the forthcoming year, could I simply say whether or not this product will be completed in the next budget year?

or

I need a forecast - Why?… I want confidence that you know what you are doing.

This is not a request for a forecast; it is an assessment of trust in the team. There are many more reliable ways to establish confidence and trust in a team than asking for a forecast.

or

I need a forecast - Why?… I have a dependency on an aspect of this project.

This may not be a request for a forecast of this project, but more a request to prioritize a dependency higher so it is completed sooner to enable other work to start.

or

I need a forecast - Why?… I want to know if my project is on track.

Essentially what you are saying is: I want to track the actual progress of work done against a guess made in advance that was based on incomplete information and unclear expectations, and I will declare this project to be ahead or behind based on this. I am sure those of you reading this will know that what you are measuring here is the accuracy of the original guess, not the health of the project. But we have been doing that for decades so why stop now?

Finally, the closest to a genuine need for a forecast:

I need a forecast - Why?… I need to prioritize or I want to know if I will get a good Return on Investment.

Both of these are very similar questions, but they are really requests for estimates, not forecasts. A rough estimate helps me gauge the cost, and when I weigh that against the value I expect to gain from the project it may help me decide whether the project is worth doing at all, or whether other projects are more important. E.g. if it is a short project, that may affect my decision on priorities.

But even here it is not the estimate that has value; it is just information that helps me evaluate and prioritize. If I already know that this project has huge value and will be my top priority, does forecasting aid that decision?

When does it make sense to forecast?

Listing those questions above it seems like I am suggesting that a request for a forecast is always the wrong question and is never really needed. And it is true that I did struggle to come up with a good example of when it makes sense to do a detailed project level forecast that included dates or any type of scheduling expectations.

It may be necessary for a sales contract, to establish a common set of expectations, although I would very much hope that sales contracts for agile projects are for time and materials and are flexible in scope and dates, if not cost too. So for the purposes of setting expectations and in negotiations I can see that there is value in an estimate, although I do still wonder whether a detailed forecast adds anything here that a reasonable cost estimate doesn’t cover. If possible I would rather work to an agreed date or an agreed budget than suggest a forecast that may lead to false expectations.

But the reality is that sometimes your customer does want one and won’t tell you why, or doesn’t know why. Some customers (and managers) are willing to accept that a forecast will cost time and money, and that the more detailed it is the more it costs. And of course being more detailed may not make it any more accurate.

More detailed investigation for your forecast is likely to build greater confidence, but it may not make the forecast more accurate. Ask the question “Will more detail have a material impact on your decisions?” If the extra effort wouldn’t affect your decision then it is just waste.

If there is no alternative and a forecast is made, I would urge that it be revised regularly and transparently; the sooner the forecast is seen as variable, the more useful it is. There is so much assumption tied to a forecast that it becomes a ball and chain if there is no expectation of it changing. It must be refreshed regularly to prevent the early assumptions being seen as certainty, or they will lead to disappointment later.

Short term forecasting

The real value in forecasts comes when the forecast covers a short time frame. Over the short term we can have much more confidence in our forecasts, especially if we have been working on the project for a while and have historic information to base our forecast on. There are fewer assumptions and fewer variables.

- Can you forecast which features are likely to be included in the next release?

- If I add a new feature now can you estimate a lead time for this?

- Can you give me an estimate of how much this feature would cost?

By limiting the scope of the forecast to areas where we have more confidence in our expectations, the forecasts become more meaningful. Whilst there is still a danger that they are seen as commitments, the risk is mitigated by the shorter time frame.
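As a rough sketch of the first question in the list above, historic throughput can be turned into a simple outlook for the next release. The approach here (multiplying the worst and best recent iterations by the iterations remaining) is just one simple assumption, not a recommended method, and the function name, the history and the backlog are all invented for illustration.

```python
def forecast_release(history, backlog, iterations_left):
    """Classify prioritized features as 'likely', 'possible' or 'unlikely'.

    history: stories completed in each recent iteration
    backlog: list of (feature, story_count) in priority order
    """
    pessimistic = min(history) * iterations_left  # worst recent pace
    optimistic = max(history) * iterations_left   # best recent pace
    outlook = {}
    cumulative = 0
    for feature, stories in backlog:
        cumulative += stories
        if cumulative <= pessimistic:
            outlook[feature] = "likely"
        elif cumulative <= optimistic:
            outlook[feature] = "possible"
        else:
            outlook[feature] = "unlikely"
    return outlook


# Hypothetical data: four past iterations and a prioritized backlog.
history = [6, 8, 5, 7]
backlog = [("search", 8), ("export", 6), ("reports", 9), ("themes", 5)]

outlook = forecast_release(history, backlog, iterations_left=3)
for feature, status in outlook.items():
    print(f"{feature}: {status}")
```

The output is deliberately coarse. It gives a range of expectation rather than a date, which makes it harder to mistake for a commitment.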

Alternatives to forecasting

Many of the questions above could have been resolved with much less effort than a detailed project level forecast. In most cases we could achieve sufficient accuracy for decision making with a high level estimate. E.g. a product like that is similar to ‘x’, therefore I’d estimate 4-8 months for a small team. Or, as a rough estimate, 12-18 months for two teams, with costs calculated accordingly.
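To illustrate how far a broad estimate can go, the sketch below turns the 4-8 month range into a cost range and checks whether narrowing it would change a go/no-go decision. The team size of 5, the cost of 10,000 per person per month and the expected values are hypothetical, as are the function names.

```python
def cost_range(months_low, months_high, people, monthly_cost_per_person):
    """Return the (low, high) cost implied by a broad duration estimate."""
    monthly_rate = people * monthly_cost_per_person
    return months_low * monthly_rate, months_high * monthly_rate


def more_detail_needed(low_cost, high_cost, expected_value):
    """True only if the go/no-go decision differs between the two ends of the range."""
    return (low_cost < expected_value) != (high_cost < expected_value)


# Hypothetical small team: 5 people at 10,000 per person per month.
low, high = cost_range(4, 8, people=5, monthly_cost_per_person=10_000)
print(low, high)  # 200000 400000

# Expected value far above the whole range: the decision is the same either way.
print(more_detail_needed(low, high, expected_value=1_000_000))  # False

# Expected value inside the range: a tighter estimate might change the decision.
print(more_detail_needed(low, high, expected_value=300_000))  # True
```

When the answer is the same at both ends of the range, further estimating effort adds nothing to the decision.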

These estimates are certainly broad, but if you have confidence in your teams, and you believe they will use Agile principles to deliver value early, communicate progress and be transparent about issues, then I see no issue with broad estimates. They are sufficient to allocate staff and resources, to prioritize, to schedule and to make Return on Investment decisions.

For the other questions you may achieve far better answers through the use of product/feature burndown charts, user story mapping, or even simple high level roadmaps. These tools provide useful information which can be used for managing expectations, identifying dependencies and visualising the progress of a product. Crucially, they also aid in setting priorities and keeping progress transparent.