I have been feeling a little on the defensive recently. I heard a Product Owner (ex-PM) complaining that he could get it done quicker with Waterfall, and I have heard a number of people asking what was wrong with the old way, and why Agile?

Short-term memories, fear of change - especially for a very large project manager community - teething troubles with the transition, and a variety of other factors are probably behind it. But long story short, I was asked if there were any metrics comparing Agile and Waterfall that we could use to ‘reassure’ some of the senior stakeholders.

After a bit of research I found an interesting survey: the Dr. Dobb’s Journal 2013 IT Project Success Survey, posted at www.ambysoft.com/surveys/

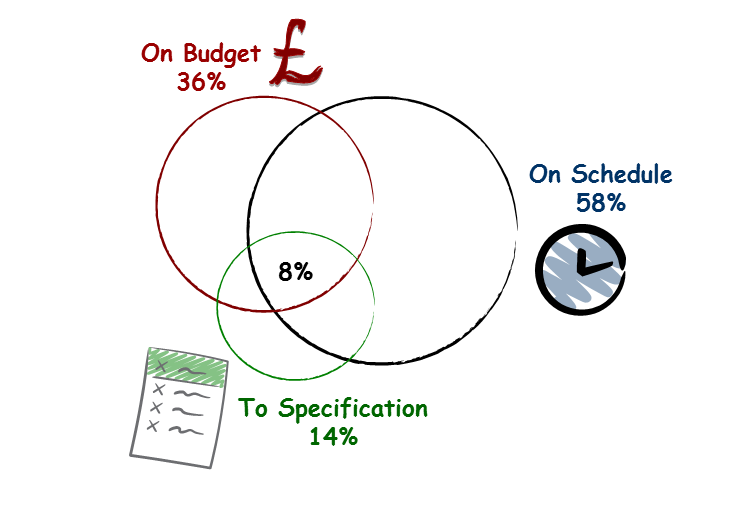

173 companies were surveyed and asked how they characterised a successful project. Only 8% considered success to be On Schedule, On Budget AND to Specification. Most classed success as meeting only one or two of these criteria.

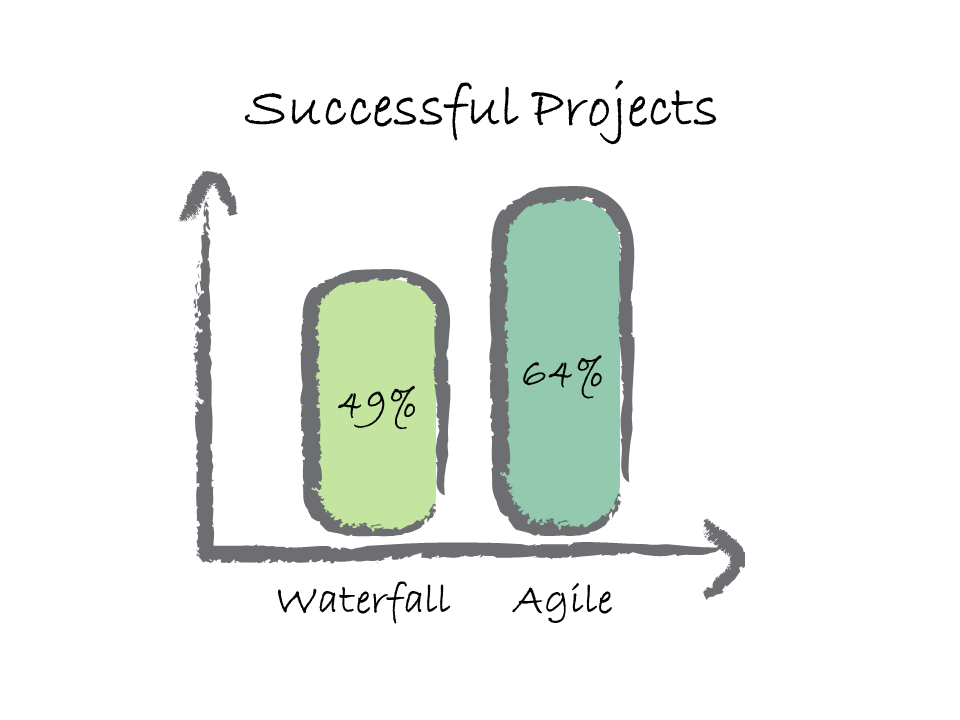

Based on the criteria listed above, and even knowing that success may mean meeting just one of those criteria, only 49% of Waterfall projects were considered successful, compared with almost two-thirds of Agile projects.

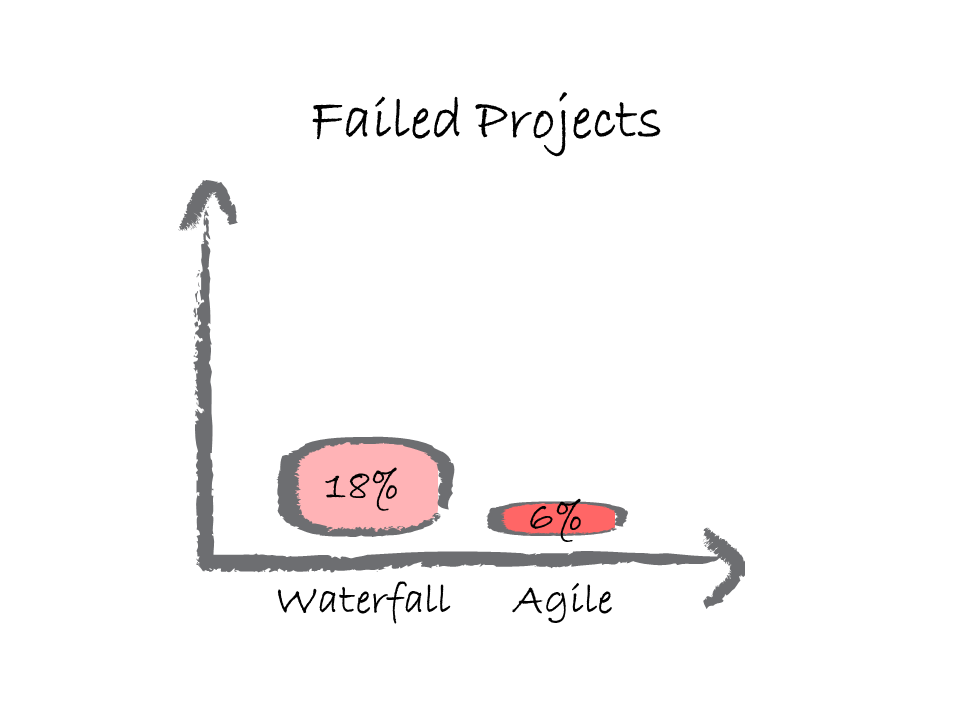

When considering failed projects (projects that were never finished), nearly 18% of Waterfall projects were deemed failures compared to only 6% of Agile projects.

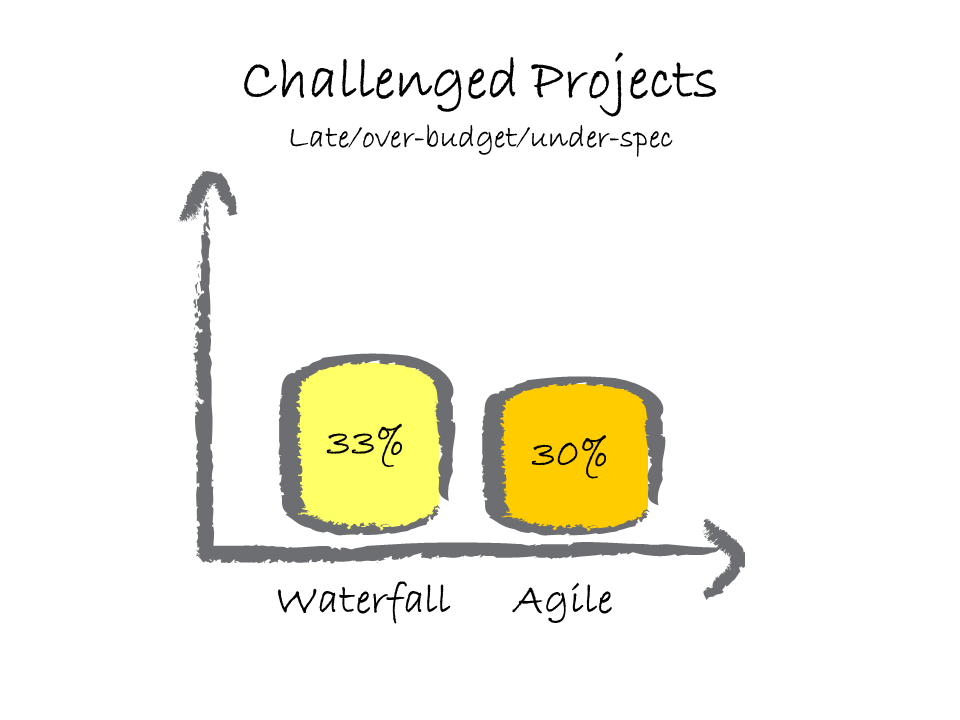

Finally, there are the projects that were considered challenging, in that they were not deemed successes but were eventually completed.

Ultimately, in my opinion, “Why Agile?” is the wrong question. The question should be “How can we improve?” I believe that Agile is a philosophy rather than a methodology. I’d go so far as to suggest that if an organization has a desire to continually improve and follows through on that, then it is ‘Agile’ regardless of the methodology it uses. And a company that follows an Agile framework without a desire to improve is not.

In other words, ‘Agile’ is the answer to the question, not the question itself. If done right and with the right intentions, Agile can help many organizations improve, and keep improving. Agile is not a destination.